Latency

Serverless scales automatically. However, the time it takes to scale from the current state to the desired state must be taken into account. If a serverless function is not ready when it is invoked, the so-called "cold start" problem occurs: there is a delay between the invocation and the actual execution of the function. This has a major impact on the user experience. Several workarounds exist for cold starts, such as scheduled pingers, the retry approach, or pre-warmers. Cloud providers also address the issue directly; Microsoft Azure, for example, offers a Premium tier of Azure Functions without the cold-start limitation.
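The retry approach mentioned above can be illustrated with a minimal sketch. It assumes that a cold start shows up on the client side as a `TimeoutError`; the names `call_with_retry`, `invoke`, `attempts`, and `base_delay` are hypothetical and not part of any provider SDK.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def call_with_retry(
    invoke: Callable[[], T],
    attempts: int = 3,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call `invoke`; on TimeoutError wait and retry with exponential backoff.

    Assumption: a cold function surfaces as a TimeoutError on the caller side.
    """
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(attempts):
        try:
            return invoke()
        except TimeoutError:
            # Give up after the last attempt and let the caller see the error.
            if attempt == attempts - 1:
                raise
            # Wait 0.5 s, 1 s, 2 s, ... while the function instance warms up.
            sleep(base_delay * 2 ** attempt)
    raise AssertionError("unreachable")
```

A retry hides the cold start from the caller but does not remove it: the first request still pays the start-up time, which is why pingers and pre-warmers try to keep an instance warm instead.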

Container-based solutions suffer less from cold starts because they are usually not scaled down to zero replicas. Scale-up, however, still has to be considered. Kubernetes typically scales based on CPU or memory usage. In event-based architectures Kubernetes often reacts too late, because the number of pending messages in the queue has no influence on scaling. To address this, cloud providers such as Microsoft Azure are actively driving solutions such as KEDA [1]. KEDA enables fine-grained autoscaling for event-driven Kubernetes workloads.
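The difference between resource-based and event-based scaling can be made concrete with a small calculation. The first function follows the replica formula documented for the Kubernetes Horizontal Pod Autoscaler; the second is a simplified sketch of queue-length scaling in the spirit of KEDA, not its actual implementation. The function names, `messages_per_replica`, and the replica limits are assumptions chosen for illustration.

```python
import math


def replicas_from_cpu(current_replicas: int,
                      current_utilization: float,
                      target_utilization: float) -> int:
    """HPA-style rule: ceil(currentReplicas * currentMetric / targetMetric)."""
    return math.ceil(current_replicas * current_utilization / target_utilization)


def replicas_from_queue(queue_length: int,
                        messages_per_replica: int,
                        min_replicas: int = 0,
                        max_replicas: int = 10) -> int:
    """Simplified event-driven rule: one replica per `messages_per_replica`
    pending messages, clamped to the allowed range (illustrative only)."""
    wanted = math.ceil(queue_length / messages_per_replica)
    return max(min_replicas, min(max_replicas, wanted))


# Two workers mostly waiting on I/O: CPU is low, so CPU-based scaling
# would even shrink the deployment although 500 messages are pending.
cpu_based = replicas_from_cpu(2, current_utilization=30, target_utilization=60)
queue_based = replicas_from_queue(500, messages_per_replica=50, max_replicas=20)
print(cpu_based, queue_based)
```

With these assumed numbers the CPU-based rule asks for fewer replicas while the backlog grows, whereas the queue-based rule scales out in proportion to the pending work.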

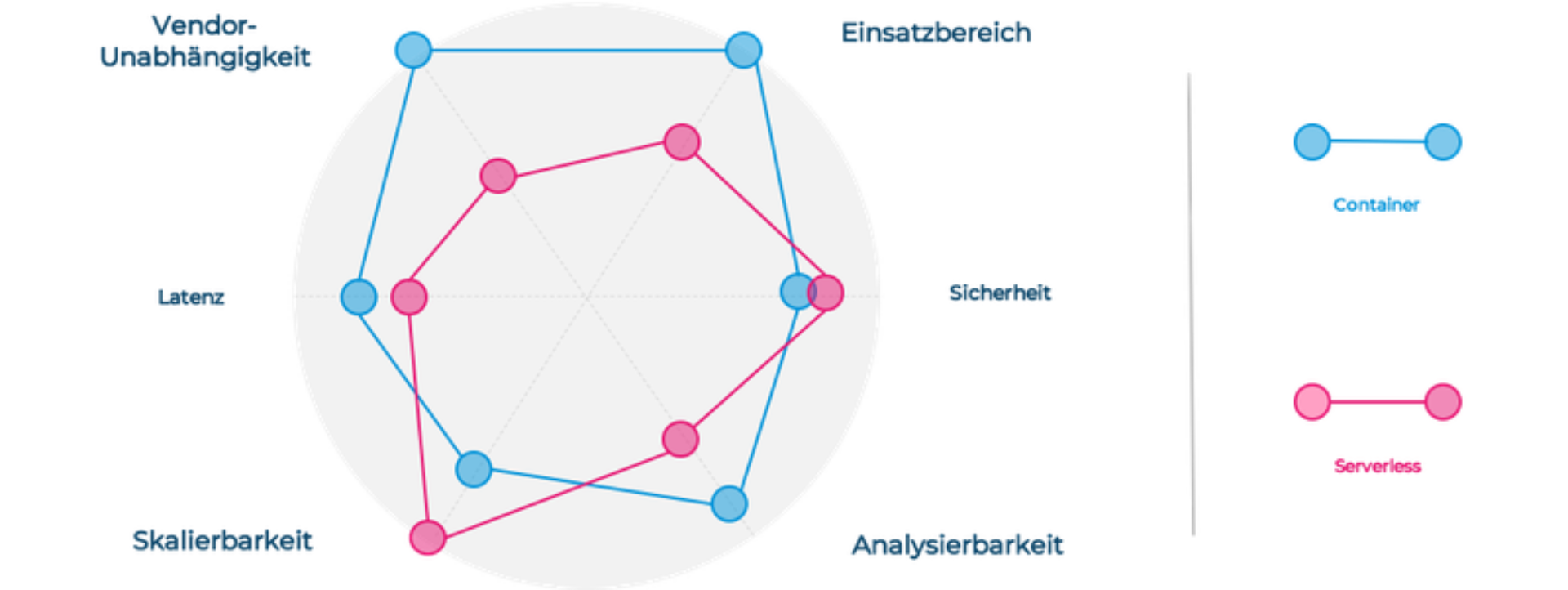

In summary, latency poses challenges for both serverless and containers. In our opinion, the solutions available for containers are more mature than those for serverless. Because a direct comparison is difficult, the focus should be on understanding the challenges and settling on solutions early in a project.